Previous DAG-based schedulers like Oozie and Azkaban tended to rely on multiple configuration files and file system trees to create a DAG, whereas in Airflow, DAGs can often be written in one Python file. DAGs can be run either on a defined schedule (e.g. hourly or daily) or based on external event triggers.

The Apache Airflow 2.4.0 release includes 46 new features, 39 improvements, 52 bug fixes, and several documentation changes.

Personal access tokens (PATs) are an alternative to using passwords for authentication to GitHub when using the GitHub API or the command line.
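To make the personal access token point concrete, here is a minimal sketch of how a PAT might be attached to a GitHub API call using only Python's standard library. The helper name `build_github_request` and the default `/user` path are assumptions made for illustration; the sketch only builds the request and does not send it.

```python
import urllib.request

GITHUB_API = "https://api.github.com"


def build_github_request(token: str, path: str = "/user") -> urllib.request.Request:
    """Build (but do not send) a GitHub API request authenticated with a PAT.

    `build_github_request` is a hypothetical helper for this sketch, not part
    of any GitHub or Airflow library.
    """
    return urllib.request.Request(
        GITHUB_API + path,
        headers={
            # The token takes the place of a password.
            "Authorization": f"Bearer {token}",
            "Accept": "application/vnd.github+json",
        },
    )
```

Passing the returned object to `urllib.request.urlopen` would perform the actual call. In practice the token would usually be read from an environment variable or a secrets store rather than written into the script.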

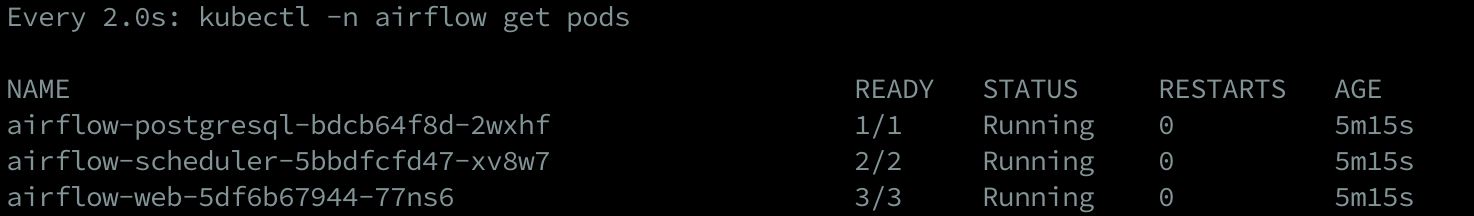

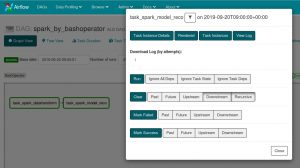

Apache Airflow is an open-source workflow management platform created by the community to programmatically author, schedule, and monitor workflows. Airflow is written in Python, and workflows are created via Python scripts. Airflow is designed under the principle of “configuration as code”. While other “configuration as code” workflow platforms exist using markup languages like XML, using Python allows developers to import libraries and classes to help them create their workflows.

Airflow uses directed acyclic graphs (DAGs) to manage workflow orchestration. Tasks and dependencies are defined in Python, and Airflow then manages the scheduling and execution. Airflow has a modular architecture and uses a message queue to orchestrate an arbitrary number of workers.

Apache Airflow 2.4.0 contains over 650 user-facing commits (excluding commits to providers or chart) and over 870 total. Airflow 2.2.0 allows providers to create custom task decorators in the TaskFlow interface. The task.docker decorator is one such decorator; it allows you to run a function in a Docker container. Airflow handles getting the code into the container and returning XCom, so you only need to worry about your function.

You can also get Airflow configurations with sensitive data from the Secrets Backend. If you use an alternative secrets backend, check inside your backend to view the values of your variables and connections.
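The idea that tasks and dependencies are defined in Python, with a scheduler working out the execution order, can be illustrated without Airflow at all. The sketch below uses the standard library's `graphlib` (Python 3.9+) rather than Airflow's API. The task names `extract`, `transform`, and `load` and the `run_workflow` helper are made up for the example.

```python
from graphlib import TopologicalSorter  # standard library, Python 3.9+


def extract():
    return [1, 2, 3]


def transform(values):
    return [v * 2 for v in values]


def load(values):
    return sum(values)


# Hypothetical task graph: each key maps to the set of tasks it depends on.
DEPENDENCIES = {
    "extract": set(),
    "transform": {"extract"},
    "load": {"transform"},
}
TASKS = {"extract": extract, "transform": transform, "load": load}


def run_workflow():
    """Run each task after its upstream tasks, passing results downstream."""
    results = {}
    # static_order() yields the tasks so that dependencies always come first;
    # it raises graphlib.CycleError if the graph is not acyclic.
    order = list(TopologicalSorter(DEPENDENCIES).static_order())
    for name in order:
        upstream = [results[dep] for dep in sorted(DEPENDENCIES[name])]
        results[name] = TASKS[name](*upstream)
    return order, results
```

Airflow adds scheduling, retries, distributed workers, and a UI on top of this basic shape, but the "acyclic" requirement is the same: a dependency cycle would leave no valid execution order.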

This technical walkthrough will show you how to authorize GitHub OAuth with Apache Airflow, step by step. Apache Airflow (or simply Airflow) is a platform to programmatically author, schedule, and monitor workflows. When workflows are defined as code, they become more maintainable, versionable, testable, and collaborative.

To use GHE authentication, you must install Airflow with the github_enterprise extras group: pip install 'apache-airflow[github_enterprise]'. An application must be set up in GHE before you can use the GHE authentication backend.

The Airflow UI only shows connections and variables stored in the Metadata DB, not those defined via any other method.
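The point about the Airflow UI only showing connections and variables from the Metadata DB matters most when values are supplied some other way. One such way is environment variables, which Airflow reads using the `AIRFLOW_VAR_` and `AIRFLOW_CONN_` prefixes with the name in upper case. The sketch below is a small standard-library helper, not an Airflow API, and its function names are assumptions. It lists such entries so you can see what the UI would not display.

```python
import os


def variable_env_name(key: str) -> str:
    """Environment variable name Airflow checks for a Variable with this key."""
    return f"AIRFLOW_VAR_{key.upper()}"


def connection_env_name(conn_id: str) -> str:
    """Environment variable name Airflow checks for a Connection with this id."""
    return f"AIRFLOW_CONN_{conn_id.upper()}"


def defined_outside_metadata_db(environ=None):
    """List env-defined variables and connections (hypothetical helper).

    These entries are usable by tasks but are not listed in the Airflow UI.
    """
    env = os.environ if environ is None else environ
    prefixes = ("AIRFLOW_VAR_", "AIRFLOW_CONN_")
    return sorted(name for name in env if name.startswith(prefixes))
```

Calling `defined_outside_metadata_db()` with no arguments inspects the current process environment. Values held in an alternative secrets backend would still need to be checked in that backend directly.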
